Custom Discord server part 2:

The Gateway

Hi there!

In this post I'll be taking a look at Discord's gateway, how the official client interacts with it and how we can simulate its behaviour.

- Part 1

- Part 2

A tale of two backends

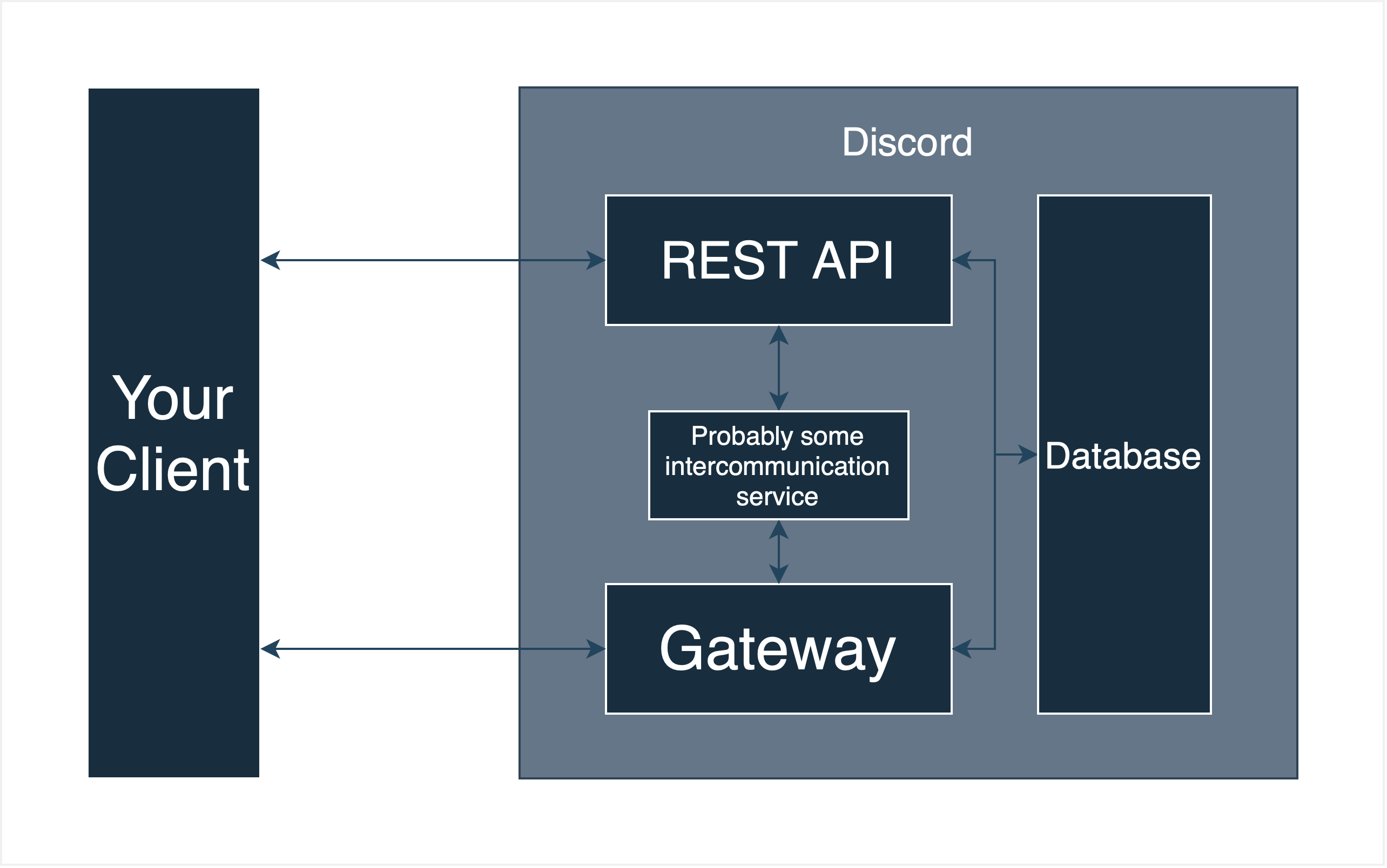

The first thing to know about the gateway is that, in the real world (as opposed to my little fantasy world here), it isn't hosted on the same servers as the REST API. This is probably for scaling reasons: while the REST API needs to handle many frequent, short-lived connections (HTTP requests), the gateway handles long-lived WebSocket connections that don't need as much throughput. Separating the two makes it possible to run different server instances tailored to the needs of each backend.

There are many ways to achieve such a separation and communicate it to the outside world, but Discord chose what is probably the simplest one: the REST API is hosted at discord.com, while the gateway resides at gateway.discord.gg. There is probably more routing going on behind the discord.com domain to separate the landing page from the actual API, but overall this is the structure, as the small diagram I made above illustrates.

TLS shenanigans, the second

For testing purposes I won't recreate this infrastructure, because having to deploy code to another computer just to test a bugfix is a true nightmare. Instead, I'll map both domains to localhost and distribute connections from there. To do this I'll use Caddy, but any other HTTPS server with reverse proxy capabilities would work. I just don't want to use nginx or Apache, because their configuration is system-wide and I'd like to include a config file in my repository. The configuration is rather simple, but we do need to generate another TLS certificate. Like the first one we generated, it can be created like this:

```sh
$ openssl req -x509 -newkey rsa:4096 -sha256 -days 3650 \
    -nodes -keyout key.gateway.pem -out cert.gateway.pem -subj "/CN=gateway.discord.gg" \
    -addext "subjectAltName=DNS:gateway.discord.gg,DNS:*.gateway.discord.gg,IP:127.0.0.1"
```

With this done, we can configure Caddy to forward requests that ask for discord.com to one port and those that ask for gateway.discord.gg to another. This is the configuration file I used:

```
discord.com {
	tls certs/cert.rest.pem certs/key.rest.pem
	reverse_proxy https://127.0.0.1:4433
}

gateway.discord.gg {
	tls certs/cert.gateway.pem certs/key.gateway.pem
	reverse_proxy https://127.0.0.1:4434
}
```
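Conceptually, all the Caddyfile above does is pick an upstream port based on the host name the client asked for. Here is a minimal Rust sketch of just that decision, meant as an illustration rather than a replacement for Caddy. `upstream_port` is a hypothetical helper, and the ports are the ones from the config.

```rust
/// Picks the local port a request should be forwarded to, based on the
/// requested host name. Mirrors the two site blocks of the Caddyfile.
/// (`upstream_port` is a hypothetical helper, not part of Caddy or warp.)
fn upstream_port(host_header: &str) -> Option<u16> {
    // A Host header may carry an explicit port ("discord.com:443"); strip it.
    let host = host_header
        .rsplit_once(':')
        .map_or(host_header, |(name, _port)| name);
    // Host names are case-insensitive, so compare in lowercase.
    match host.to_ascii_lowercase().as_str() {
        "discord.com" => Some(4433),        // REST API backend
        "gateway.discord.gg" => Some(4434), // gateway backend
        _ => None,                          // unknown host: nothing to proxy to
    }
}
```

Keeping this in the proxy rather than in the Rust binaries is what lets the REST API and the gateway stay separate processes, just like on Discord's side.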

Gateway goober

For now, until I've got something up and running, I'll only run the gateway server, so I'll comment out the discord.com entry in my /etc/hosts in the meantime. Before I continue, however, let's take a look at how the gateway functions. At its core, the gateway is just a simple WebSocket server that sends messages to the client. When a client first connects, it immediately receives its first message: a HELLO event. Events are the way Discord chose to structure its data. These events are encoded in either JSON or Erlang's External Term Format (ETF), for the web and native clients respectively. The client chooses which one it wants to use when opening the WebSocket connection, via the encoding query parameter.
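The encoding parameter is part of the WebSocket URL's query string, for example `/?encoding=etf&v=9&compress=zlib-stream` (the version number is just an example). Below is a minimal sketch of how the gateway could read it, assuming it has access to the raw query string. `parse_encoding` is a hypothetical helper. Falling back to JSON when the parameter is missing is an assumption of this sketch.

```rust
/// Payload encodings the gateway can speak.
#[derive(Debug, PartialEq)]
enum Encoding {
    Json,
    Etf,
}

/// Reads the `encoding` parameter from a raw query string such as
/// "encoding=etf&v=9&compress=zlib-stream".
/// Assumption: a missing parameter means JSON; unknown values are rejected.
fn parse_encoding(query: &str) -> Result<Encoding, String> {
    let value = query
        .split('&')
        .filter_map(|pair| pair.split_once('='))
        .find(|(key, _)| *key == "encoding")
        .map(|(_, value)| value);
    match value {
        None | Some("json") => Ok(Encoding::Json),
        Some("etf") => Ok(Encoding::Etf),
        Some(other) => Err(format!("unsupported encoding: {other}")),
    }
}
```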

After the server sends the HELLO event, it expects the client to send an IDENTIFY payload. It contains information about the client, but most importantly its authentication token. Currently, we don't care about any of that, so we'll just send back the READY event, concluding the handshake that Discord, in their infinite wisdom, thought up. From then on, the client periodically sends HEARTBEAT payloads, each of which the server should answer with a HEARTBEAT ACK. Discord made a handy diagram of the lifecycle of a gateway connection, which you can find here.
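The handshake described above can be sketched as a tiny per-connection state machine. It works on opcodes only. The opcode numbers (0 DISPATCH, 1 HEARTBEAT, 2 IDENTIFY, 10 HELLO, 11 HEARTBEAT ACK) and the close codes 4003 ("not authenticated") and 4005 ("already authenticated") are taken from Discord's public gateway docs. `Session`, `Action` and the decision to silently ignore other opcodes once ready are hypothetical choices for this sketch.

```rust
// Gateway opcodes used in this sketch (values as documented by Discord).
const OP_DISPATCH: u8 = 0;
const OP_HEARTBEAT: u8 = 1;
const OP_IDENTIFY: u8 = 2;
const OP_HELLO: u8 = 10;
const OP_HEARTBEAT_ACK: u8 = 11;

/// What the server should do in reaction to something the client sent.
#[derive(Debug, PartialEq)]
enum Action {
    /// Send a payload with this opcode (and, for dispatches, this event name).
    Send(u8, Option<&'static str>),
    /// Close the WebSocket with this gateway close code.
    Close(u16),
    /// Payloads this sketch does not understand are ignored.
    Ignore,
}

#[derive(Debug, Clone, Copy, PartialEq)]
enum State {
    AwaitingIdentify,
    Ready,
}

/// Hypothetical per-connection handshake state.
struct Session {
    state: State,
}

impl Session {
    /// Called right after the WebSocket upgrade: the server speaks first with HELLO.
    fn connect() -> (Session, Action) {
        (Session { state: State::AwaitingIdentify }, Action::Send(OP_HELLO, None))
    }

    /// Reacts to the opcode of a payload received from the client.
    fn on_client_op(&mut self, op: u8) -> Action {
        match (self.state, op) {
            // Heartbeats are acknowledged in every state.
            (_, OP_HEARTBEAT) => Action::Send(OP_HEARTBEAT_ACK, None),
            // IDENTIFY completes the handshake; answer with the READY dispatch.
            (State::AwaitingIdentify, OP_IDENTIFY) => {
                self.state = State::Ready;
                Action::Send(OP_DISPATCH, Some("READY"))
            }
            // 4005: already authenticated.
            (State::Ready, OP_IDENTIFY) => Action::Close(4005),
            // 4003: not authenticated (RESUME is not handled in this sketch).
            (State::AwaitingIdentify, _) => Action::Close(4003),
            (State::Ready, _) => Action::Ignore,
        }
    }
}
```

Ignoring unknown opcodes after the handshake is deliberately lenient, because the official client sends plenty of payloads a minimal server doesn't implement yet.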

The web to my socket

My first goal is to get the client to actually start up, i.e. get past the loading screen and stay there, so the implementation needs to handle both the initial handshake and heartbeating. Even though I split the gateway into its own binary, I'm still using warp, because it already supports WebSockets and my target/ folder is already ~2.7 GiB large. Accepting WebSocket connections is fairly simple, and from the initial request we can already see that the official client uses ETF as its gateway encoding. You knew that already, because I told you two paragraphs ago, but for me this was new.

This is also kind of a problem, because while Rust does have a crate to encode and decode ETF, its serde module is pretty outdated. No matter, I'll just update it myself. Discord has some specific requirements for its ETF messages, like encoding strings as byte arrays, but these are easily implemented. The hard part of receiving messages from the client is turning them into a Rust struct, or rather an enum. While I do have serde to assist me, I still have to tell it which enum variant to deserialise. Luckily twilight, which I'm already using for some of Discord's models, has a deserializer that does exactly that. I just have to adapt it to also work with ETF, because the original version is JSON-only.
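To get a feel for what ETF looks like on the wire, here is a hand-rolled encoder for a tiny subset of terms. It is not the crate mentioned above, just a std-only sketch. The tag bytes come from Erlang's External Term Format spec: 131 version, 97 SMALL_INTEGER_EXT, 98 INTEGER_EXT, 109 BINARY_EXT, 116 MAP_EXT, 119 SMALL_ATOM_UTF8_EXT. Following the "strings as byte arrays" rule, strings become BINARY_EXT. Encoding map keys as binaries too is an assumption worth checking against real traffic. `Value`, `encode` and the heartbeat interval in the test are made up for the example.

```rust
// ETF tag bytes (from Erlang's External Term Format documentation).
const VERSION: u8 = 131;
const SMALL_INTEGER_EXT: u8 = 97; // unsigned 8-bit integer
const INTEGER_EXT: u8 = 98; // signed 32-bit big-endian integer
const BINARY_EXT: u8 = 109; // u32 big-endian length + raw bytes
const MAP_EXT: u8 = 116; // u32 big-endian arity + key/value pairs
const SMALL_ATOM_UTF8_EXT: u8 = 119; // u8 length + UTF-8 atom name

/// A tiny, hypothetical subset of the values a gateway payload can contain.
enum Value {
    Int(i32),
    Bool(bool),
    Str(String),
    Map(Vec<(String, Value)>),
}

/// Encodes a value as a complete ETF term, including the version byte.
fn encode(value: &Value) -> Vec<u8> {
    let mut out = vec![VERSION];
    write_term(value, &mut out);
    out
}

/// Strings are sent as binaries ("byte arrays"), not as Erlang strings.
fn write_binary(s: &str, out: &mut Vec<u8>) {
    out.push(BINARY_EXT);
    out.extend_from_slice(&(s.len() as u32).to_be_bytes());
    out.extend_from_slice(s.as_bytes());
}

fn write_term(value: &Value, out: &mut Vec<u8>) {
    match value {
        Value::Int(n) if (0..=255).contains(n) => {
            out.push(SMALL_INTEGER_EXT);
            out.push(*n as u8);
        }
        Value::Int(n) => {
            out.push(INTEGER_EXT);
            out.extend_from_slice(&n.to_be_bytes());
        }
        Value::Bool(b) => {
            // Booleans are the atoms `true` and `false`.
            let name = if *b { "true" } else { "false" };
            out.push(SMALL_ATOM_UTF8_EXT);
            out.push(name.len() as u8);
            out.extend_from_slice(name.as_bytes());
        }
        Value::Str(s) => write_binary(s, out),
        Value::Map(entries) => {
            out.push(MAP_EXT);
            out.extend_from_slice(&(entries.len() as u32).to_be_bytes());
            for (key, val) in entries {
                write_binary(key, out); // assumption: keys are binaries as well
                write_term(val, out);
            }
        }
    }
}
```

Encoding a HELLO-shaped map `{op: 10, d: {heartbeat_interval: 41250}}` with this produces 54 bytes. It starts with `131, 116, 0, 0, 0, 2` (version, then a map with two entries).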

What does the gateway say

Sending the first HELLO payload is fairly easy: it only has one field, the heartbeat interval, and is already defined in twilight. Parsing the IDENTIFY is a bit more complicated. The documented version, which bots have to send, is quite different from what the official client sends. For example, the client's version also includes information about the user agent and client build, while omitting the intents field. You can find the exact structure here. I don't really need any of the information in this message, but for shits and giggles I'll parse it anyway, which will also come in handy later, when we have to distinguish between IDENTIFY and RESUME payloads.
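Telling IDENTIFY and RESUME apart works by looking at the opcode first (2 for IDENTIFY, 6 for RESUME, 1 for HEARTBEAT) and only then reading the fields of `d`. That is the same idea as picking an enum variant during deserialisation. Here is a minimal sketch in which `d` is simplified to a flat string map. `ClientPayload` and `classify` are hypothetical, and the field selection is illustrative (RESUME's `seq` and the heartbeat's sequence number are left out).

```rust
use std::collections::HashMap;

/// Client payloads this sketch understands (fields trimmed for brevity).
#[derive(Debug, PartialEq)]
enum ClientPayload {
    Heartbeat,
    Identify { token: String },
    Resume { token: String, session_id: String },
}

/// Chooses the variant from the opcode, then pulls the fields it needs from `d`.
fn classify(op: u8, d: &HashMap<String, String>) -> Result<ClientPayload, String> {
    // Looks up a required field, producing a readable error if it's missing.
    let field = |name: &str| {
        d.get(name)
            .cloned()
            .ok_or_else(|| format!("op {op}: missing field `{name}`"))
    };
    match op {
        1 => Ok(ClientPayload::Heartbeat),
        2 => Ok(ClientPayload::Identify { token: field("token")? }),
        6 => Ok(ClientPayload::Resume {
            token: field("token")?,
            session_id: field("session_id")?,
        }),
        other => Err(format!("unsupported opcode {other}")),
    }
}
```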

Just answering with the documented READY payload does not work, so we'll actually have to do some minor reverse engineering. Looking at the payloads the client receives from the official gateway isn't super simple, but with a mix of mitmproxy, Firefox's dev tools and a simple Python script to decode the zlib stream, I can see what the two are sending each other. Surprise, surprise: it's vastly different from what bots receive. Probably the biggest difference is the way guilds (servers) are handled. If you have experience with Discord's bot API, you know that the initial READY only includes partial information about the guilds you're in, namely each guild's ID and whether it's unavailable. The rest is sent later via GUILD_CREATE payloads. Actual user clients, on the other hand, receive all information about their guilds in one big event. This makes sense: while normal users can only be in up to 200 guilds, popular bots like Mee6 can join millions of them, so a single payload could grow into the gigabytes (assuming roughly 10 kB of JSON per guild and 1 million guilds, and disregarding sharding and compression, that's about 10 GB). You can find details on the READY payload here.

The sacred zlib stream

Speaking of compression, let's take a look at how Discord compresses its messages. At first glance it's pretty simple: just run your events through zlib and off they go. However, that doesn't work. Looking at what the web client prints to its console, we can see that something about our compression is off:

```
Error: zlib error, -3, invalid stored block lengths
```

Taking a closer look at how the client is supposed to receive payloads, it turns out that it maintains a single zlib context and decompresses all its messages with it. DEFLATE, the compression algorithm zlib uses, is stateful (this is a simplification): compressed data can refer back to anything within the last 32 KiB of the stream, and the zlib header only appears once, at the very start. So while the client has been treating everything we send as one continuous stream, we have been starting a brand-new stream, header and all, for every single message. In other languages this would probably be an easy fix (see zlib.compressobj in Python), but Rust's flate2 seemingly doesn't want you to keep one zlib context for all your messages, and its Compress struct needs some extra work to be easily usable with variably sized buffers. Luckily, the twilight-gateway crate has already done this work for the decompression direction, so with some minor tweaks I was able to send messages the client actually understands.
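On the sending side, the fix boils down to keeping one long-lived compressor per connection and doing a sync flush after every payload. With flate2 that would presumably be a single `Compress` whose `compress_vec` is called with `FlushCompress::Sync`, though that part is not shown or tested here. A sync flush ends each message with the four bytes `00 00 FF FF`. That suffix is how a receiver knows a compressed message is complete, since one message may span several WebSocket frames. The std-only sketch below shows only this receiving-side framing. `ZlibStreamBuffer` is a hypothetical name, and the actual inflating with one shared context is left out.

```rust
/// Every message in a zlib-stream ends with the Z_SYNC_FLUSH marker.
const ZLIB_SUFFIX: [u8; 4] = [0x00, 0x00, 0xff, 0xff];

/// Collects binary WebSocket frames until a complete compressed message has
/// arrived. The returned bytes still have to be fed into the *same*
/// long-lived inflate context as all previous messages.
#[derive(Default)]
struct ZlibStreamBuffer {
    pending: Vec<u8>,
}

impl ZlibStreamBuffer {
    fn push_frame(&mut self, frame: &[u8]) -> Option<Vec<u8>> {
        self.pending.extend_from_slice(frame);
        if self.pending.ends_with(&ZLIB_SUFFIX) {
            // Hand out the finished message and start a fresh buffer.
            Some(std::mem::take(&mut self.pending))
        } else {
            // Message not complete yet; wait for more frames.
            None
        }
    }
}
```

A handy side effect for debugging: if a server's output never ends in this suffix, it's not flushing per message, and a client using a shared context will stall or error out.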

Back to events

The READY payload isn't the only one the client expects from the gateway. I don't know exactly why, but Discord chose to split the READY data into two payloads: READY and, wait for it, READY_SUPPLEMENTAL. READY_SUPPLEMENTAL includes extra data on things like guilds and game invites; you can look at it here.
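Both READY and READY_SUPPLEMENTAL are DISPATCH events (opcode 0). Besides the data, they carry the event name `t` and a per-session sequence number `s`, which the client later echoes back in its heartbeats. Here is a minimal sketch of handing out those sequence numbers. `Dispatch` and `Dispatcher` are hypothetical, payload bodies are omitted, and numbering starting at 1 is an assumption based on observed traffic.

```rust
/// Envelope of a DISPATCH (op 0) event, without its `d` body.
#[derive(Debug, PartialEq)]
struct Dispatch {
    op: u8,
    t: &'static str,
    s: u64,
}

/// Hands out increasing sequence numbers for one gateway session.
#[derive(Default)]
struct Dispatcher {
    last_seq: u64,
}

impl Dispatcher {
    fn dispatch(&mut self, event: &'static str) -> Dispatch {
        // Assumption: the first event of a session gets s = 1.
        self.last_seq += 1;
        Dispatch { op: 0, t: event, s: self.last_seq }
    }
}
```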

Sending these two already gets the client past the loading screen and onto the friends page, but it won't stay there for long. Discord uses a simple heartbeating protocol to keep gateway sessions alive when there isn't much other traffic going through them. Back in the HELLO event we told the client how often it has to send us a HEARTBEAT, and now we have to handle those, otherwise the connection times out. Doing so is fairly straightforward: we just send back a HEARTBEAT ACK and we're done.
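Answering heartbeats keeps the client happy. The server side of the timeout, i.e. noticing when a client has gone quiet, can be tracked with a few timestamps. This is a minimal sketch: `HeartbeatMonitor` is hypothetical, and the grace period on top of the advertised interval is an arbitrary value chosen here, not something taken from Discord.

```rust
use std::time::{Duration, Instant};

/// Tracks whether a client is still heartbeating on time.
/// Assumption: a client gets the advertised interval plus some made-up slack.
struct HeartbeatMonitor {
    interval: Duration,
    grace: Duration,
    last_seen: Instant,
}

impl HeartbeatMonitor {
    /// `now` is the moment HELLO was sent.
    fn new(interval: Duration, grace: Duration, now: Instant) -> Self {
        HeartbeatMonitor { interval, grace, last_seen: now }
    }

    /// Records a HEARTBEAT; the caller then replies with HEARTBEAT ACK (op 11).
    fn on_heartbeat(&mut self, now: Instant) {
        self.last_seen = now;
    }

    /// True once the client has missed its deadline and should be disconnected.
    fn timed_out(&self, now: Instant) -> bool {
        now.saturating_duration_since(self.last_seen) > self.interval + self.grace
    }
}
```

Passing `now` in explicitly instead of calling `Instant::now()` inside keeps the logic easy to test without actually waiting.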

And that leaves us with a running Discord client. I've also already implemented some minor details, like parsing some of the messages the client sends to the gateway and the SESSIONS_REPLACE event, which the official gateway sends after the handshake but which isn't required for the client to start up. The next steps will be to write blanket implementations for all the REST endpoints, and then we'll take a look at backing those up with an actual database, although that will take some time. Until then:

Farewell!